If you want to understand the collision between artificial intelligence and cybersecurity, consider a thought experiment.

A phishing campaign targets 10,000 employees inside a single organization. A large language model tasked with analyzing those alerts might correctly flag 80% as malicious. But faced with the same logs and the same email on a different pass, it may reach a different conclusion. Now multiply that inconsistency across the thousands of signals flooding a typical security operations center every day.

That’s the tension at the heart of a $30 million funding round recently announced by Qevlar AI, a Paris-based startup building what it calls an autonomous AI SOC platform.

The round was co-led by Partech and Forgepoint Capital International, with participation from EQT Ventures, and arrives at a moment when the cybersecurity industry is grappling with a fundamental question: can AI actually be trusted to make security decisions on its own?

Two AI engineers walk into a SOC

Qevlar was founded in January 2023 by Ahmed Achchak and Hamza Sayah, both machine learning engineers with overlapping but complementary paths.

Achchak studied mathematics and biotechnology at École Centrale Paris before spending three years as a senior machine learning engineer at Natixis, followed by a stint as CTO of Minautor, a European secondhand auto parts reseller, where he built the technical infrastructure from scratch and helped grow revenue sixfold.

Sayah came through EPFL in Lausanne, earning a bachelor’s in mathematics and a master’s in applied mathematics, then cut his teeth at an ETH Zurich spin-off and as head of research at Ponicode, a software testing startup that was acquired by CircleCI.

The two share a common thread: both won the Huawei Big Data Challenge in France, and both arrived at cybersecurity not from within the industry but from the world of applied AI, a perspective that shapes how Qevlar approaches the problem.

The company joined Station F’s AI startup program, backed by Meta, Hugging Face, and Scaleway, and quickly began stacking milestones.

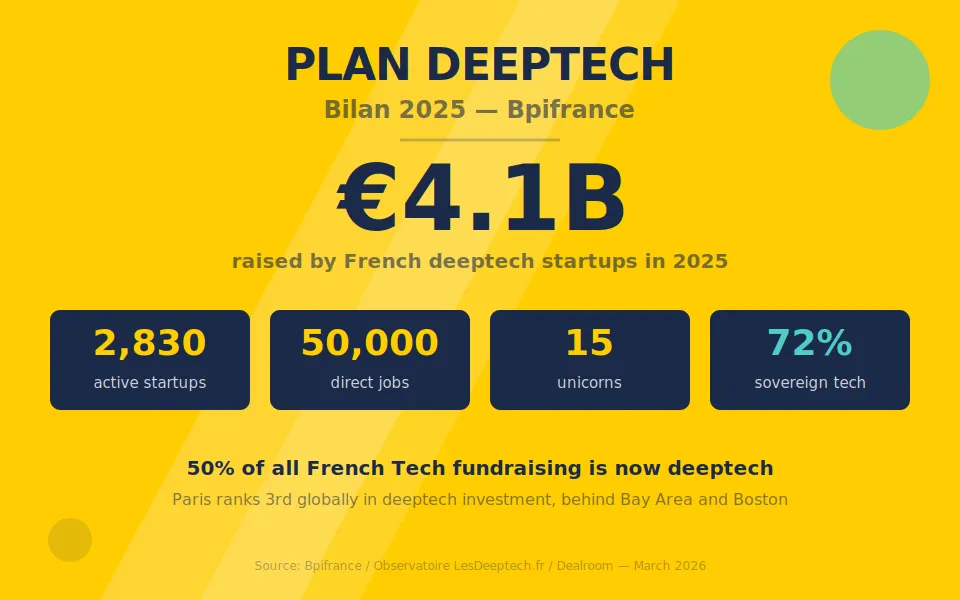

A $14 million round led by EQT Ventures and Forgepoint Capital preceded the current $30 million raise, bringing total funding to $44 million. Along the way, Qevlar picked up a string of industry awards, including Orange Cyberdefense’s Best Partner Innovation Award, Product of the Year 2025 from MSP Today, and a Growth Award from Forum InCyber.

Headline also named it among the top 100 cybersecurity companies shaping the AI space. None of that would matter much if the product didn’t work. But the customer list, which now spans Orange Cyberdefense, Sodexo, MediaMarkt, Atos, GlobalConnect, and others across Europe and the U.S., suggests the traction is real.

The consistency problem

For Achchak, the answer to whether AI can be trusted with security decisions hinges on something most people don’t think about when they hear the phrase “AI-powered security”: consistency.

“The problem with the LLMs is that they are inconsistent by nature,” Achchak said. “Imagine you have a phishing campaign that targets 10,000 employees inside your organization. The issue you have is with an LLM. The LLM would probably converge to different outcomes.”

That observation matters more than it might first appear.
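To make the inconsistency concrete, here is a minimal Python sketch. It is an illustration only, not Qevlar's method: `noisy_verdict` is a hypothetical stand-in for a sampled LLM call, and the 80% figure is borrowed from the thought experiment at the top of this article. The sketch compares judging every copy of the same email independently with deciding once per distinct email and reusing that verdict.

```python
import random
from collections import Counter


def noisy_verdict(email: str, rng: random.Random, p_malicious: float = 0.8) -> str:
    """Hypothetical stand-in for a sampled LLM call.

    The verdict depends on a random draw, not on the email text,
    so identical inputs can receive different labels.
    """
    return "malicious" if rng.random() < p_malicious else "benign"


def independent_verdicts(emails, rng):
    """Judge every email separately, even exact duplicates."""
    return Counter(noisy_verdict(e, rng) for e in emails)


def cached_verdicts(emails, rng):
    """Decide once per distinct email body, then reuse that verdict."""
    cache = {}
    verdicts = []
    for e in emails:
        if e not in cache:
            cache[e] = noisy_verdict(e, rng)
        verdicts.append(cache[e])
    return Counter(verdicts)


rng = random.Random(0)  # seeded so the run is repeatable
# One campaign: the same (made-up) email sent to 10,000 employees.
campaign = ["Reset your password at hxxp://example.invalid"] * 10_000

independent = independent_verdicts(campaign, rng)
deduplicated = cached_verdicts(campaign, rng)

print("independent:", dict(independent))    # a mix of both labels
print("deduplicated:", dict(deduplicated))  # a single label for the whole campaign
```

Caching makes the outcome consistent, not necessarily correct: the single verdict can still be wrong, which is why consistency is only one part of the trust question.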

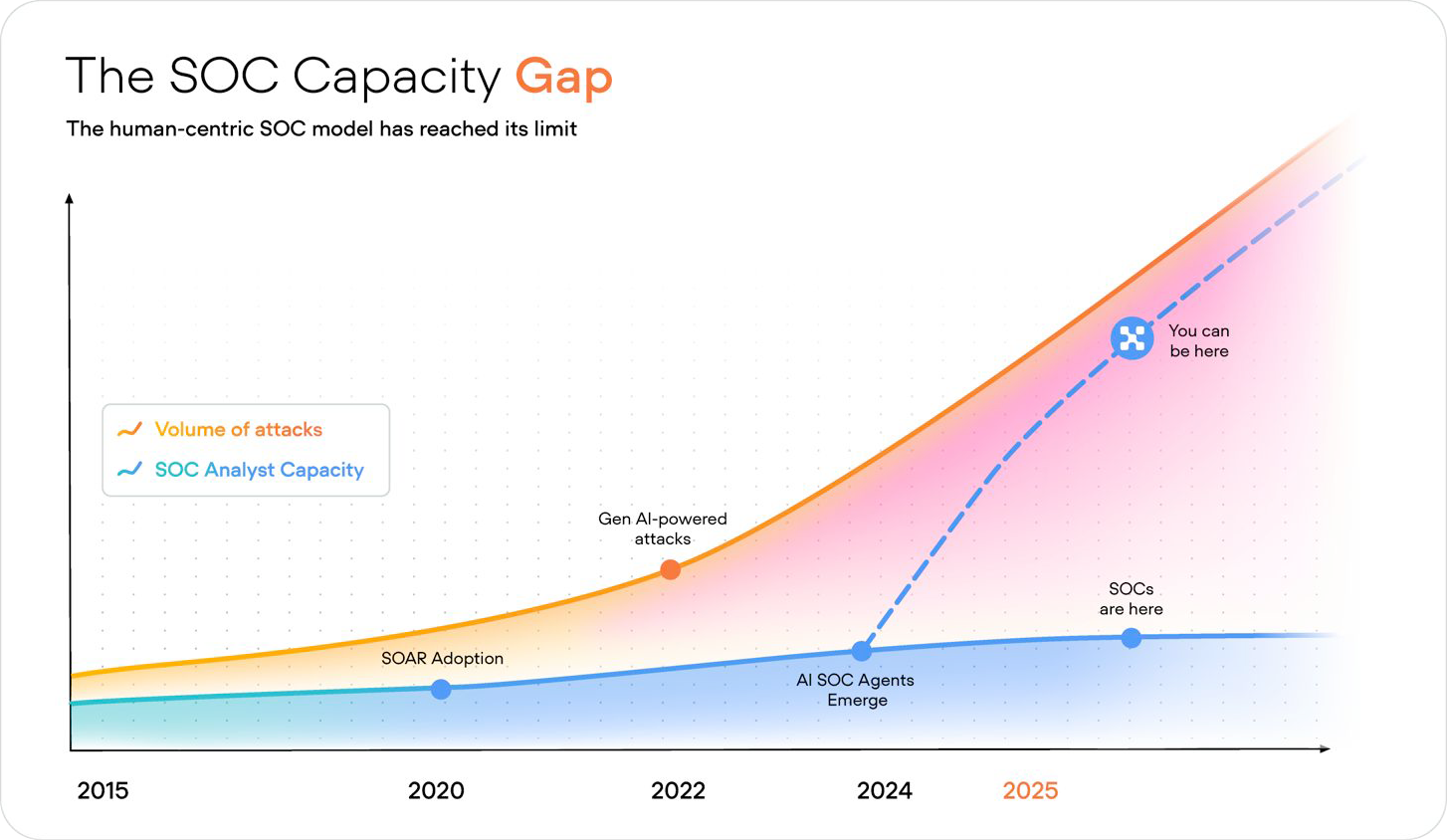

Security operations centers are the nerve centers of enterprise defense. This is where analysts sift through a relentless torrent of alerts, trying to separate genuine threats from noise. A Forrester analysis found that just three attack scenarios can trigger thousands of alerts. Gartner estimates that 70% of the detection and response cycle time is spent in triage and investigation.

The gap between alert volume and analyst capacity is only widening.

Beyond the brute-force approach

So the instinct to throw AI at the problem is understandable. But Achchak, who comes from a machine learning background rather than cybersecurity, is wary of the brute-force approach. Qevlar’s platform doesn’t simply pipe everything through an LLM and hope for the best. Instead, it uses what Achchak describes as a constellation of “expert systems that all collaborate together.”

“We have, for example, pure Bayesian modeling, like statistical modeling that uses classical machine learning models on some parts of the product,” he said. “We have at the core, the brain is what we call a graph AI. It represents a dynamic ontology that learns as it goes and refines its understanding of a given environment, of a given customer environment. And so all these AI models collaborate together, helping us achieve much, much better consistency, much higher level of explainability, and control our costs.”
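As a rough illustration of what "pure Bayesian modeling" can mean in alert triage, the sketch below applies Bayes' rule to a single alert. It is a generic textbook calculation, not a description of Qevlar's models, and every probability in it is a made-up assumption. Unlike a sampled LLM response, the same inputs always produce the same score.

```python
def posterior_malicious(prior: float,
                        p_signal_given_malicious: float,
                        p_signal_given_benign: float) -> float:
    """Bayes' rule: P(malicious | signal observed)."""
    evidence = (p_signal_given_malicious * prior
                + p_signal_given_benign * (1.0 - prior))
    return p_signal_given_malicious * prior / evidence


# Hypothetical numbers: 1% of emails are malicious; a suspicious-link
# signal fires on 90% of malicious emails and 5% of benign ones.
p = posterior_malicious(prior=0.01,
                        p_signal_given_malicious=0.9,
                        p_signal_given_benign=0.05)

# A second, independent signal (also hypothetical) updates the score
# again, using the first posterior as the new prior.
p2 = posterior_malicious(prior=p,
                         p_signal_given_malicious=0.7,
                         p_signal_given_benign=0.1)

print(f"after one signal:  {p:.3f}")
print(f"after two signals: {p2:.3f}")
```

Each step is a formula an analyst can inspect, which is one way a statistical component can support the explainability Achchak describes.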

This hybrid approach reflects a broader maturation in how companies deploy AI in high-stakes environments. And it has a direct impact on costs.

Qevlar’s systems are less expensive to run than platforms built purely on LLMs. On the customer side, the pricing is deliberately old-school in its predictability: per completed investigation, not per token or per compute time.

“From a business model perspective, what the CISO wants is something that is predictable,” Achchak said. “They want to be able to forecast that. They want to be able, from the beginning of 2026, to tell how much the tool would cost me during the whole year.”
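The forecasting argument can be sketched with a little arithmetic. The prices, alert volume, and token counts below are hypothetical placeholders, not Qevlar's or any vendor's actual figures. The point is only that a per-investigation bill depends on one number a CISO can estimate in advance, while a per-token bill also depends on how much text each investigation happens to consume.

```python
import random

PRICE_PER_INVESTIGATION = 2.00  # hypothetical flat price, in dollars
PRICE_PER_1K_TOKENS = 0.01      # hypothetical usage price, in dollars


def per_investigation_cost(n_investigations: int) -> float:
    """Bill depends only on how many investigations were completed."""
    return n_investigations * PRICE_PER_INVESTIGATION


def per_token_cost(token_counts: list[int]) -> float:
    """Bill depends on the total tokens consumed across investigations."""
    return sum(token_counts) / 1000 * PRICE_PER_1K_TOKENS


rng = random.Random(42)  # seeded so the run is repeatable
n = 50_000               # assumed number of investigations in a year

# Two made-up years with the same alert volume but different verbosity.
light_year = [rng.randint(2_000, 20_000) for _ in range(n)]
heavy_year = [rng.randint(20_000, 400_000) for _ in range(n)]

print("per investigation:", per_investigation_cost(n))
print("per token, light year:", round(per_token_cost(light_year), 2))
print("per token, heavy year:", round(per_token_cost(heavy_year), 2))
```

With the same number of investigations, the flat-price total is identical in both years, while the usage-based total moves with log size and investigation depth.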

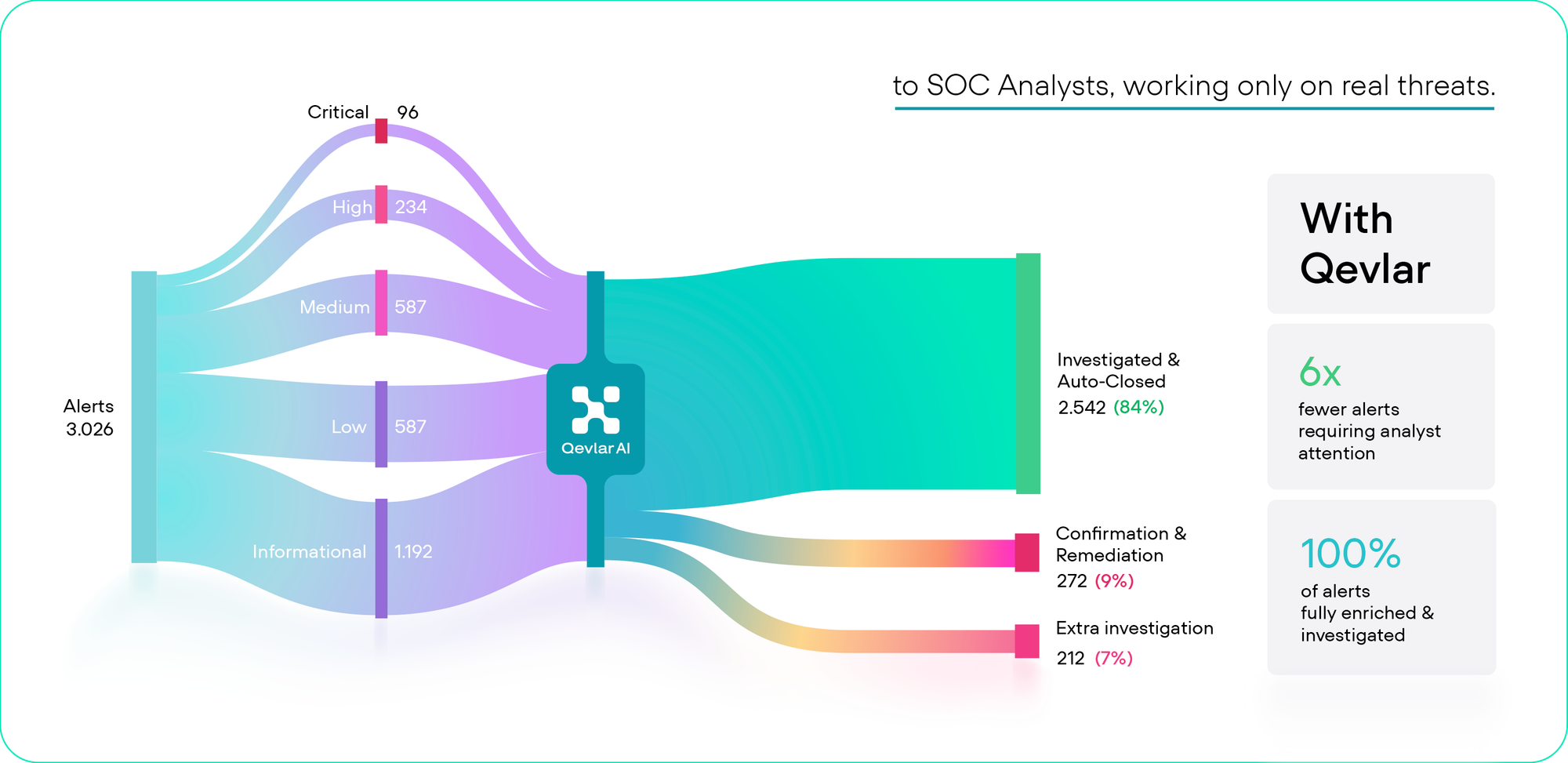

Qevlar backs that up with what it calls “proof of value” deployments. Customers benchmark the platform against competitors or their own existing playbooks before committing. Achchak claims the results have been decisive: a 10x reduction in investigation time, down to three minutes, with 100% of alerts investigated around the clock.

Scaling from Europe

The more interesting part of the story is where Qevlar wants to go next.

Qevlar currently has customers in France, Germany, the UK, Sweden, and the United States, and is building out its U.S. presence. That ambition carries its own challenges.

Achchak is candid about the fact that successful cybersecurity companies have historically come from Israel or the United States, not Europe. When asked about the company’s biggest challenge, he didn’t point to market education or competition.

“For us, it’s probably hiring,” he said. “We want people who are ready to prove the stats wrong, who will join us to serve our customers. We’re a cybersecurity company originating from Europe, and in general, successful cybersecurity companies tend to come either from Israel or from the US.”

That’s an honest assessment that hints at a larger theme in European tech: the talent bottleneck may ultimately be a bigger constraint than market access or technology.

The company has 52 people and is growing rapidly. It has the funding, the enterprise customers, and a product philosophy well-suited to a moment when the market is growing skeptical of AI tools that promise everything and explain nothing.

From firefighting to intelligence

In terms of the product, the vision is to move beyond alert investigation toward a more strategic approach.

Achchak frames the current state of most SOCs as fundamentally reactive: measuring success by how many alerts they tackle and how quickly they close them. He calls it a “firefighting approach.”

If an AI system is investigating every signal flowing through an organization, the reasoning goes, it should be able to correlate alerts across time, spot patterns that humans and traditional detection systems miss, and surface insights about root causes.

“If it correlates an alert from six months ago with one from today, is there any pattern that the AI is noticing that my previous detection systems are not seeing?” Achchak said. “And the answer is yes. So the question becomes: how do we package that and how do we surface additional insights beyond our ability to investigate?”

It’s an ambitious leap from a tool to an intelligence layer. It will require continued product development alongside geographic expansion.

“We’re putting out the fire,” he said, “and finding out what started it to make sure it doesn’t happen again.”